Jun 22

Azure CNI with Cilium: Most scalable and performant container networking in the Cloud

In December 2022, we announced our partnership with Isovalent to bring a next-generation extended Berkeley Packet Filter (eBPF) dataplane for cloud-native applications to Microsoft Azure, and revealed that the next generation of the Azure Container Network Interface (CNI) dataplane would be powered by eBPF and Cilium.

Today, we are thrilled to announce the general availability of Azure CNI powered by Cilium. Azure CNI powered by Cilium is a next-generation networking platform that combines two powerful technologies: Azure CNI, for scalable and flexible Pod networking control integrated with the Azure Virtual Network stack, and Cilium, an open-source project that uses an eBPF-powered data plane for networking, security, and observability in Kubernetes. It takes advantage of Cilium’s direct routing mode inside guest virtual machines and combines it with Azure native routing inside the Azure network, which improves network performance for workloads deployed in Azure Kubernetes Service (AKS) clusters and provides built-in support for enforcing network security.

In this blog, we will delve further into the performance and scalability results achieved through this powerful networking offering in Azure Kubernetes Service.

Performance and scale results

Performance tests were conducted in AKS clusters in overlay mode to analyze system behavior and evaluate performance under heavy load. These tests simulate scenarios where the cluster is subjected to high levels of resource utilization, such as large numbers of concurrent requests or heavy workloads. The objective is to measure performance metrics such as response time, throughput, scalability, and resource utilization to understand the cluster’s behavior and identify any performance bottlenecks.

Service routing latency

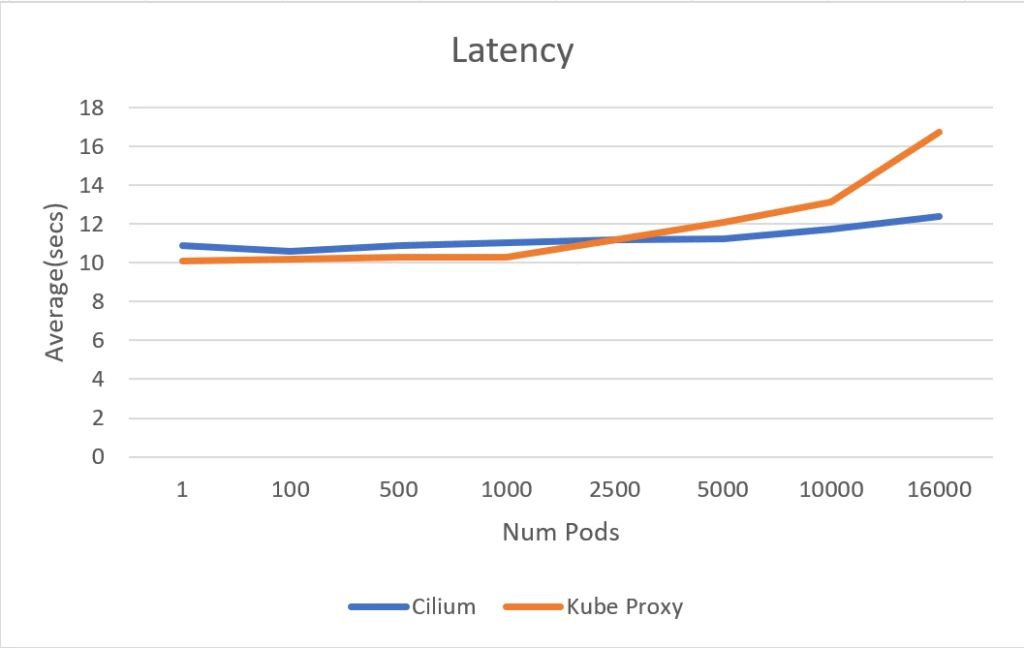

Azure CNI powered by Cilium and kube-proxy show similar service routing latency until the number of pods reaches 5,000. Beyond this threshold, service routing latency in the kube-proxy based cluster starts to increase, while it stays consistent in the Cilium based cluster.

Notably, at 16,000 pods, the Azure CNI powered by Cilium cluster shows a 30 percent reduction in service routing latency compared to the kube-proxy cluster. These results reconfirm that eBPF based service routing performs better at scale than the iptables based service routing used by kube-proxy.
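One common explanation for this gap is that rule chains are evaluated sequentially, while eBPF programs can look services up in hash maps. The sketch below is a toy model of that difference only, not a reproduction of kube-proxy or Cilium internals; the addresses, backend names, and function names are hypothetical.

```python
# Toy model only: contrasts a sequential rule scan with a hash-map lookup.
# It does not reproduce kube-proxy or Cilium internals.

def linear_lookup(rules, vip):
    """Scan rules in order, as a sequential rule chain would.

    Returns (backend, number of comparisons performed).
    """
    comparisons = 0
    for rule_vip, backend in rules:
        comparisons += 1
        if rule_vip == vip:
            return backend, comparisons
    return None, comparisons


def hashed_lookup(table, vip):
    """Look the address up in a dict: one probe regardless of table size."""
    return table.get(vip), 1


def build_services(count):
    """Create hypothetical (virtual IP, backend) pairs for illustration."""
    return [(f"10.0.{i // 256}.{i % 256}", f"backend-{i}") for i in range(count)]


services = build_services(1000)
table = dict(services)
last_vip = services[-1][0]  # worst case for the sequential scan

backend_linear, cost_linear = linear_lookup(services, last_vip)
backend_hashed, cost_hashed = hashed_lookup(table, last_vip)
print(cost_linear, cost_hashed)
```

In this model the sequential scan needs as many comparisons as there are services in the worst case, while the dictionary lookup stays at a single probe as the service count grows.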

Service routing latency in seconds

The measurements were taken using ApacheBench (ab), a tool commonly used for benchmarking and load testing web servers.
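The exact invocation used for these measurements is not given here. As a minimal sketch, assuming a standard `ab` run with its `-n` (total requests) and `-c` (concurrency) options, the mean latency could be extracted from the report as follows. The service URL, the request counts, and the sample report text are illustrative placeholders, not measured results.

```python
import re


def ab_command(url, requests=1000, concurrency=10):
    """Build an ApacheBench command line (-n total requests, -c concurrency)."""
    return ["ab", "-n", str(requests), "-c", str(concurrency), url]


def mean_latency_seconds(ab_output):
    """Extract the mean 'Time per request' from ab's report, in seconds."""
    match = re.search(r"Time per request:\s+([\d.]+)\s+\[ms\]\s+\(mean\)", ab_output)
    if match is None:
        raise ValueError("no mean 'Time per request' line found")
    return float(match.group(1)) / 1000.0


# Illustrative report fragment in ab's output format; the numbers are made up.
SAMPLE_OUTPUT = """\
Requests per second:    400.00 [#/sec] (mean)
Time per request:       25.000 [ms] (mean)
Time per request:       2.500 [ms] (mean, across all concurrent requests)
"""

# Hypothetical in-cluster service URL, used here only to show the command shape.
command = ab_command("http://my-service.default.svc.cluster.local/")
latency = mean_latency_seconds(SAMPLE_OUTPUT)
print(command)
print(latency)
```

The command is only constructed here, not executed; passing it to `subprocess.run` with `capture_output=True` would produce the text that `mean_latency_seconds` parses.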

Scale test performance

The scale test was conducted in an AKS cluster running Azure CNI powered by Cilium, using a Standard D4 v3 SKU node pool (16 GB memory, 4 vCPU). The purpose of the test was to evaluate the performance of the cluster under high scale conditions. The test focused on capturing the central processing unit (CPU) and memory usage of the nodes, as well as monitoring the load on the API server and Cilium.

The test encompassed three distinct scenarios, each designed to assess different aspects of the cluster’s performance under varying conditions.

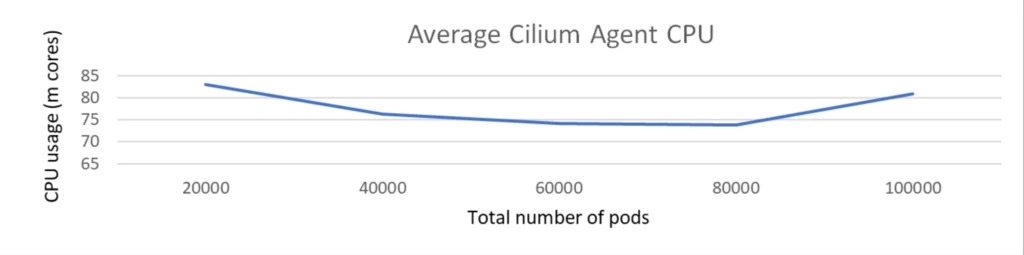

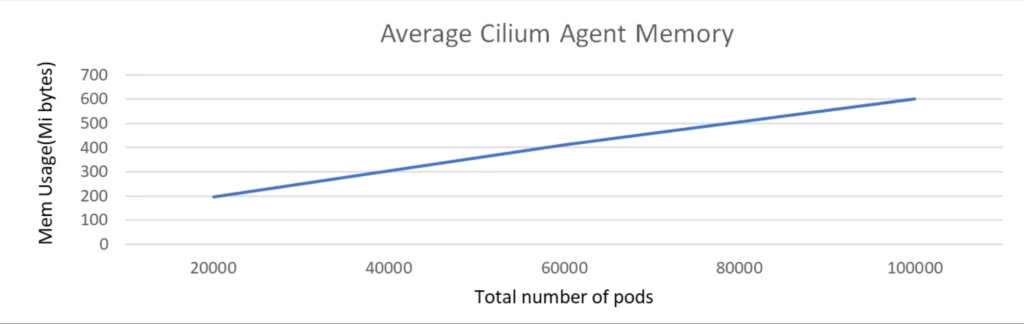

Scale test with 100k pods with no network policy

The scale test was executed with a cluster comprising 1K nodes and a total of 100K pods. The test was conducted without any network policies or Kubernetes services deployed.

During the scale test, as the number of pods increased from 20K to 100K, the CPU usage of the Cilium agent remained consistently low, not exceeding 100 millicores, and its memory usage stayed at around 500 MiB.

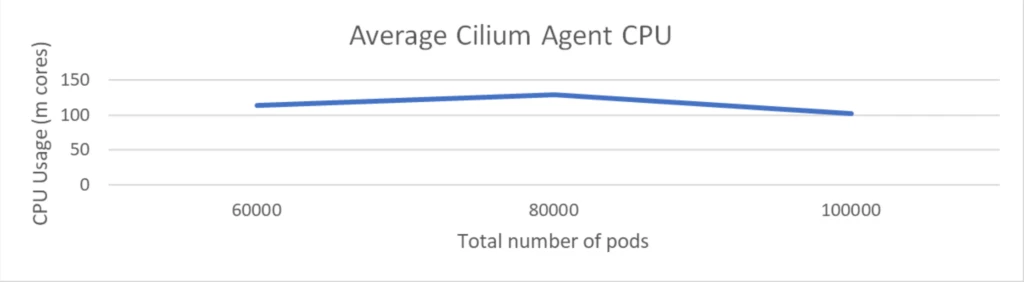

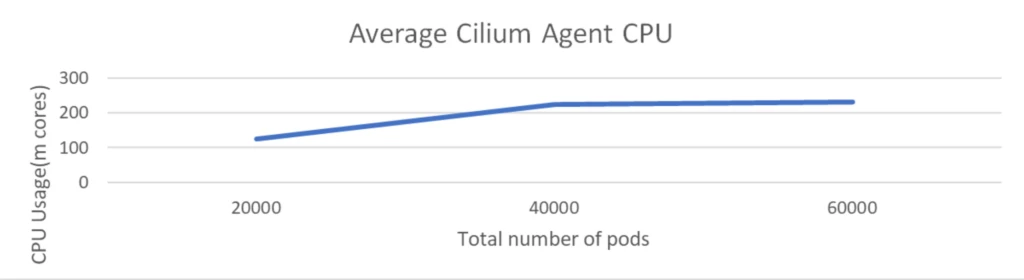

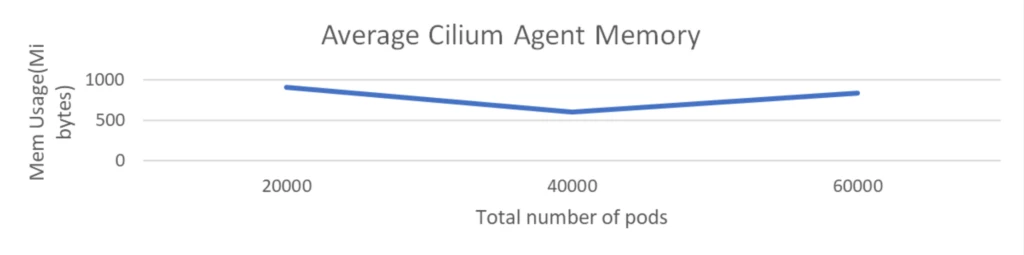

Scale test with 100k pods with 2k network policies

The scale test was executed with a cluster comprising 1K nodes and a total of 100K pods. The test involved the deployment of 2K network policies but did not include any Kubernetes services.

The CPU usage of the Cilium agent remained under 150 millicores, and its memory usage was around 1 GiB. This demonstrates that Cilium maintained low overhead even as the number of network policies doubled.

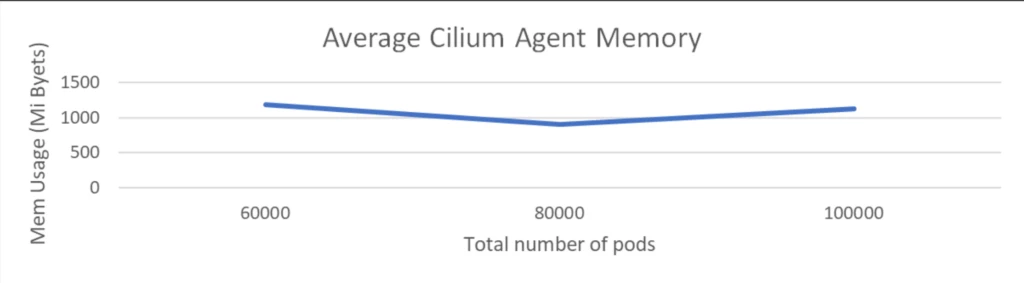

Scale test with 1k services with 60k pods backend and 2k network policies

This test was executed with 1K nodes and 60K pods, along with 2K network policies and 1K services, each service having 60 pods associated with it.

The CPU usage of the Cilium agent remained at around 200 millicores, and its memory usage remained at around 1 GiB. This demonstrates that Cilium continues to maintain low overhead even when a large number of services are deployed. As shown earlier, service routing via eBPF provides significant latency gains for applications, and it is encouraging to see that this is achieved with very low overhead at the infrastructure layer.
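To put these figures in context, the sketch below expresses the reported agent usage as a share of one Standard D4 v3 node. It assumes the figures are per-node agent values, treats the quoted 16 GB as 16 GiB, and uses the approximate or upper-bound numbers from the three scenarios; the variable and function names are illustrative.

```python
# Node capacity for the Standard D4 v3 SKU quoted in the text.
NODE_CPU_MILLICORES = 4 * 1000   # 4 vCPU
NODE_MEMORY_MIB = 16 * 1024      # assumption: the quoted 16 GB is treated as 16 GiB

# (scenario, agent CPU in millicores, agent memory in MiB).
# Values are the approximate or upper-bound figures reported for each scenario.
scenarios = [
    ("100K pods, no policies", 100, 500),
    ("100K pods, 2K policies", 150, 1024),
    ("60K pods, 2K policies, 1K services", 200, 1024),
]


def share_percent(used, total):
    """Return used/total as a percentage rounded to two decimals."""
    return round(100 * used / total, 2)


report = {
    name: (
        share_percent(cpu, NODE_CPU_MILLICORES),
        share_percent(memory, NODE_MEMORY_MIB),
    )
    for name, cpu, memory in scenarios
}

for name, (cpu_share, memory_share) in report.items():
    print(f"{name}: {cpu_share}% of node CPU, {memory_share}% of node memory")
```

Under these assumptions the agent stays at or below roughly 5 percent of node CPU and 6.25 percent of node memory in all three scenarios.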

Get started with Azure CNI powered by Cilium

To wrap up, as the results above show, Azure CNI with the eBPF dataplane of Cilium is the most performant option and scales much better across nodes, pods, services, and network policies while keeping overhead low. This offering is now generally available in Azure Kubernetes Service (AKS) and works with both Overlay and VNET modes for CNI. We invite you to try Azure CNI powered by Cilium and experience the benefits in your AKS environment.

To get started today, see the Azure CNI powered by Cilium documentation.
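As a rough sketch of what creating such a cluster in Overlay mode can look like, the snippet below assembles an Azure CLI command using the `--network-plugin`, `--network-plugin-mode`, and `--network-dataplane` options. The cluster name, resource group, and location are hypothetical placeholders, and the flags should be checked against the current documentation before use.

```python
import shlex


def cilium_overlay_create_command(cluster, resource_group, location):
    """Assemble an `az aks create` command for Azure CNI Overlay with the Cilium dataplane.

    The flag set is an assumption based on the Azure CLI at the time of writing;
    verify it against the official documentation.
    """
    return [
        "az", "aks", "create",
        "--name", cluster,
        "--resource-group", resource_group,
        "--location", location,
        "--network-plugin", "azure",
        "--network-plugin-mode", "overlay",
        "--network-dataplane", "cilium",
    ]


# Hypothetical names, used only to show the shape of the command.
create_command = cilium_overlay_create_command("my-cluster", "my-resource-group", "westus2")
print(shlex.join(create_command))
```

The command is only printed here; running it requires an authenticated Azure CLI session and an existing resource group.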

The post Azure CNI with Cilium: Most scalable and performant container networking in the Cloud appeared first on Azure Blog.